There is a peculiar modern moment that only exists because our machines now carry opinions. You replace a camera module, the phone powers on, the image looks fine, and then a warning appears anyway, not because anything is broken, but because the device has decided the part is illegitimate. Somewhere in the circuitry, a quiet rule has been violated. The hardware is present, the electrons do their jobs, yet the product behaves as if reality must be verified by paperwork.

That shift is not about screws, glue, or even difficulty. It is about authority. The most important change in consumer technology over the last decade is not the camera bump, the glass finish, or the processor race. It is the new dependency between physical objects and remote permission. Parts pairing, component authentication, software-locked features, and calibration gates are turning everyday devices into systems that can refuse service, not on technical grounds, but on policy.

If you want to understand where technology is going, stop looking at flashy launches. Look at what happens when something breaks.

Parts Pairing Is the Invisible Contract Inside Your Hardware

Parts pairing is the practice of binding a component to a specific device identity, typically through cryptographic checks, serialized pairing data, or calibration values that the device expects to match. When the expected match fails, the device may warn the user, disable a feature, degrade performance, or block certain functions entirely. Sometimes it is framed as an integrity check, sometimes as “for your safety,” and sometimes it is not explained at all.
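To make the mechanism concrete, here is a minimal sketch of one way such a check could work. It is not any manufacturer's actual scheme. It assumes a hypothetical design in which a pairing tool writes a keyed tag (an HMAC over the device ID and the part serial) onto the part, and the device recomputes that tag at startup. The names `Part`, `make_pairing_tag`, `check_part`, and the `Outcome` values are all invented for illustration.

```python
import hashlib
import hmac
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    OK = "ok"
    WARN = "warn"                        # show a notice, keep the part working
    DISABLE_FEATURE = "disable_feature"  # refuse to use the part


@dataclass(frozen=True)
class Part:
    serial: str
    pairing_tag: bytes  # written by a hypothetical pairing tool


def make_pairing_tag(device_key: bytes, device_id: str, part_serial: str) -> bytes:
    """Bind a part serial to one device identity with a keyed hash (assumed scheme)."""
    message = f"{device_id}|{part_serial}".encode()
    return hmac.new(device_key, message, hashlib.sha256).digest()


def check_part(device_key: bytes, device_id: str, part: Part,
               on_mismatch: Outcome = Outcome.WARN) -> Outcome:
    """Recompute the expected tag and compare it in constant time."""
    expected = make_pairing_tag(device_key, device_id, part.serial)
    if hmac.compare_digest(expected, part.pairing_tag):
        return Outcome.OK
    # The cryptography only detects the mismatch; what happens next is policy.
    return on_mismatch


key = b"device-secret"  # illustrative value only
original = Part("CAM-001", make_pairing_tag(key, "PHONE-A", "CAM-001"))
donor = Part("CAM-002", make_pairing_tag(b"other-secret", "PHONE-B", "CAM-002"))

print(check_part(key, "PHONE-A", original))
print(check_part(key, "PHONE-A", donor))
print(check_part(key, "PHONE-A", donor, on_mismatch=Outcome.DISABLE_FEATURE))
```

The point of the sketch is the `on_mismatch` parameter. The check itself says nothing about whether the donor camera works. Whether a mismatch produces a notice or a dead feature is a decision made outside the math.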

In older electronics, parts were physical. A replacement screen was a replacement screen. A battery was a battery. Repair was mostly a question of mechanical skill and access to components. Now, parts increasingly carry a relationship status. They are not merely compatible; they are recognized. Recognition can be local, mediated by an internal secure element, or it can depend on proprietary tools that write pairing data. Either way, the device becomes a gatekeeper of its own maintenance.

That gatekeeping matters because it changes the meaning of interchangeability. Interchangeability used to be an engineering virtue. In a world of paired parts, interchangeability is often treated as a threat vector, an inconvenience, or a revenue leak. Repair becomes a permissioned act.

The Security Argument Is Not Fiction, It Is Incomplete

The strongest defense of parts pairing is security. If a malicious actor can swap a component, they could potentially introduce spyware, bypass biometric sensors, or compromise the integrity of the device. A counterfeit battery could overheat. A third-party face recognition module could be engineered to report a match that never happened. A replaced USB controller could become a hardware implant. These are not paranoid fantasies. Hardware attacks exist, and the more valuable a device becomes as a personal vault, the more tempting it is to tamper with it.

A world without any component verification is not automatically a safer world. The question is not whether verification can be useful. The question is what kind of verification is justified, how it is implemented, and who controls the remedy when verification fails. Security becomes a pretext when it is used to justify restrictions that go beyond what the actual threat requires.

If a device warns that a part is unrecognized, that can be defensible as a transparency measure. If the device disables essential functions even when the replacement is high quality and properly installed, the security rationale begins to look like an excuse for control. The difference between those two is not philosophical. It is practical. It determines whether repair remains a normal consumer right or becomes an act of supplication.

Calibration Is the New Monopoly Lever, Because It Looks Like Engineering

There is a second argument that often accompanies security: calibration. Modern components are not identical. Cameras, displays, batteries, and sensors vary slightly. High-quality performance may require calibration data that is tuned to the specific device and the specific part. A camera module might need lens shading correction, autofocus tuning, or color profiles. A display may need brightness mapping or True Tone behavior tied to sensor readings. A battery may need power management parameters.
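A toy sketch can show why calibration doubles as a gate. It assumes a hypothetical display pipeline in which each panel has a stored profile keyed by its serial number, and a generic profile is used when none is found. The `DisplayCalibration` fields, the `GENERIC` values, and both function names are illustrative assumptions, not a real device API.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class DisplayCalibration:
    gain: float     # per-panel scale factor (hypothetical)
    max_drive: int  # largest drive value the panel accepts (hypothetical)


# Generic profile used when no per-part data exists (assumed values).
GENERIC = DisplayCalibration(gain=1.0, max_drive=255)


def calibration_for(part_serial: str,
                    store: Dict[str, DisplayCalibration]) -> Tuple[DisplayCalibration, bool]:
    """Return (profile, is_part_specific) for the installed panel."""
    profile = store.get(part_serial)
    if profile is None:
        return GENERIC, False
    return profile, True


def drive_value(requested: float, cal: DisplayCalibration) -> int:
    """Map a requested brightness in [0.0, 1.0] to a panel drive value."""
    clamped = min(max(requested, 0.0), 1.0)
    return min(round(clamped * cal.gain * cal.max_drive), cal.max_drive)


store = {"PANEL-001": DisplayCalibration(gain=0.9, max_drive=255)}

factory_cal, factory_specific = calibration_for("PANEL-001", store)
swapped_cal, swapped_specific = calibration_for("PANEL-999", store)

print(factory_specific, drive_value(0.5, factory_cal))
print(swapped_specific, drive_value(1.0, swapped_cal))
```

In this sketch the swapped panel still lights up, only with generic tuning. Whoever is allowed to write a new entry into `store` decides whether the replacement ever gets better than that.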

Calibration is real engineering. It is also a perfect chokepoint.

When calibration tools are proprietary and restricted, the right to repair becomes the right to install a part that is physically compatible but permanently treated as second-class. Independent repair can restore function, but not full dignity. The device works, but it works with a scar. That scar can be used as a deterrent, a constant reminder that the “proper” path goes through authorized channels.

The subtlety here is important. Consumers generally do not object to calibration when it improves quality. They object to calibration being turned into a toll. When a company controls the calibration pipeline, it controls which repairs are considered legitimate. It controls the market for replacement parts without having to argue about parts at all. It can claim to be protecting quality while also protecting revenue.

When Ownership Becomes Conditional, Resale Becomes Fragile

Resale markets thrive on predictability. A used device is appealing when buyers can assume it will behave normally after purchase, and when repair is a straightforward way to keep it alive. Parts pairing threatens both assumptions.

Imagine a used phone with a replaced screen. The screen is excellent, the touch works, the brightness is strong. Yet the device shows a persistent alert about a “non-genuine” component. Buyers become suspicious, even when the replacement is perfectly fine. Suspicion reduces value. Reduced value shortens the effective lifespan of devices, not because hardware is dead, but because the market penalizes anything that looks like tampering.

This changes consumer behavior upstream. If repair lowers resale value, people repair less. If people repair less, more devices are discarded earlier. If more devices are discarded earlier, the environmental footprint expands. A policy choice in software becomes an ecological outcome in landfills and mining operations.

There is also a social dimension. People who rely on second-hand devices are often those most affected by repair barriers. If official service is expensive and independent service is punished by software warnings, the technology becomes less accessible to the people who need affordable options most. The device does not merely belong to someone. It belongs to someone who can afford the approved path.

The Independent Repair Shop Is Being Turned Into a Bureaucracy

Repair used to be a craft. Increasingly, it is an administrative job with a soldering iron.

Independent shops now face a world where replacing a part is only half the process. The other half is negotiating with pairing procedures, proprietary software tools, and serial-based authentication. Even when companies provide official programs, access can come with contracts, restrictions, reporting obligations, and tool costs that are difficult for small operators. The result is a two-tier repair economy: those who can participate in authorized ecosystems, and those who are pushed into a gray zone where function is possible but the device may punish the outcome.

This is not only about business. It is about knowledge transmission. In a traditional repair ecosystem, techniques spread. People learn, share, improve. In a permissioned repair ecosystem, knowledge becomes less transferable. Tools are gated, data is withheld, and experimentation becomes risky. The pool of skilled technicians shrinks over time, not because talent disappears, but because the system is designed to limit who can become competent.

And when repair knowledge shrinks, consumers lose a form of local resilience. A town with a good repair shop is a town where devices last longer, budgets stretch further, and outages are less catastrophic. Repair is quiet infrastructure. Weakening it has consequences that extend beyond convenience.

Counterfeit Parts Are a Problem, but Locking Everyone Out Is Not the Only Response

Counterfeit components exist, and some are dangerous. Low-grade batteries can swell or ignite. Poorly manufactured charging accessories can cause failures. Fake screens can have bad touch controllers or low brightness. The answer to counterfeits, though, does not have to be a system that treats every non-authorized part as hostile.

There are approaches that preserve safety without monopolizing repair. Transparency helps. Standards help. Clear labeling helps. The ability to verify part origin in a consumer-friendly way helps. A device can warn about unknown provenance while still allowing functionality. It can support calibration workflows that independent shops can access without punitive contracts. It can provide diagnostics that empower consumers instead of scaring them into submission.
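The warn-but-allow approach can be sketched in a few lines. This is only an illustration under stated assumptions: a hypothetical device runs a functional self-test on each part and separately records whether its origin could be verified. `PartStatus`, `Provenance`, and `evaluate` are invented names.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Provenance(Enum):
    VERIFIED = "verified"
    UNKNOWN = "unknown"


@dataclass(frozen=True)
class PartStatus:
    name: str
    provenance: Provenance
    self_test_passed: bool  # result of a hypothetical functional check


def evaluate(part: PartStatus) -> Tuple[bool, str]:
    """Return (enabled, message). Function decides enablement; provenance only informs."""
    if not part.self_test_passed:
        return False, f"{part.name}: failed self-test; service recommended"
    if part.provenance is Provenance.UNKNOWN:
        return True, f"{part.name}: working; origin could not be verified"
    return True, f"{part.name}: working; origin verified"


for status in (
    PartStatus("camera", Provenance.UNKNOWN, True),
    PartStatus("battery", Provenance.VERIFIED, True),
    PartStatus("display", Provenance.UNKNOWN, False),
):
    print(evaluate(status))
```

The design choice is that a part is disabled only when it fails a functional test. Unknown origin changes the message the owner sees, not what the device is willing to do.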

When a system chooses the most restrictive route, it is making a statement about trust. It is declaring that the consumer cannot be trusted to make informed decisions, that independent technicians cannot be trusted to do competent work, and that the only acceptable maintenance is maintenance performed under corporate oversight. That statement might be convenient for security departments and financial models, but it is not the only defensible choice.

The Car Industry Is the Preview, Not the Exception

Technology rarely stays confined to phones. Patterns migrate.

Cars are already full of software locks, serialized modules, and features that can be enabled or disabled via remote authorization. Ownership is increasingly split between physical possession and digital entitlement. A replaced module may require dealer coding. A used car may lose features when accounts change hands. Subscription-based capabilities blur the line between buying a product and renting a set of permissions.

The logic behind parts pairing fits this trajectory perfectly. Once devices become networks of identity-bound components, the manufacturer’s relationship with the product does not end at the moment of sale. It persists through authentication, updates, and service workflows. The manufacturer becomes a long-term participant in your ownership, whether you invited that participation or not.

That persistence is not always negative. Updates can improve safety. Recalls can be handled more efficiently. Security vulnerabilities can be patched. Yet persistence also creates a channel for control, and control tends to expand to fill whatever channel exists.

The Right to Repair Is Not a Slogan, It Is a Definition of Citizenship in a Digital World

People often talk about the right to repair as a consumer issue, but it is deeper than that. Repair rights define whether a person is treated as a full participant in the technological environment or merely as a permitted user of corporate property.

If you own a device, you should be able to maintain it, understand it, and restore it to function without begging for permission. That does not mean every repair is safe or wise. It means the system should not be designed to punish autonomy by default. It means the risks of repair should be addressed through information, standards, and accountability, not through architecture that centralizes control.

There is also a democratic aspect. When products become unrepairable except through narrow channels, the market becomes less competitive. Prices rise, choice narrows, and consumers lose leverage. The ability to repair is one of the few mechanisms that keeps manufacturers honest over the long term. It forces them to build devices that can survive beyond warranty cycles. It forces them to respect the fact that people live with products longer than marketing campaigns do.

The New Scar Tissue of Modern Tech: Warnings, Pop-Ups, and Digital Shame

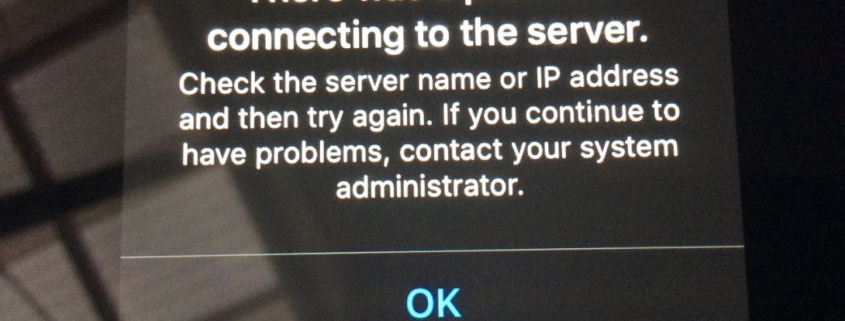

A small warning on a settings screen might seem trivial, but it carries social weight. Warnings are psychological instruments. They tell users what is legitimate and what is suspect. Over time, they shape norms. A generation grows up believing that a repaired device is inherently untrustworthy, even when the repair is expertly done. The warning becomes a quiet stigma.

This stigma matters because it changes what people feel comfortable buying, selling, and using. It turns repair into a moral category instead of a practical one. It creates a world where the “clean” device is the one that never needed human intervention, which is a strange ideal for physical objects that live in real pockets and real hands.

Digital shame is an underrated policy tool. It is softer than a lock, but it can be just as effective. You do not have to disable the phone if you can make repair feel like a mistake.

What Happens When Medical Devices Follow the Same Logic

Consumer tech is already heavy with permissions, but the most unsettling implications appear when you imagine the same logic applied to devices that keep people alive. Insulin pumps, CPAP machines, hearing aids, prosthetics, diagnostic tools, and home monitoring systems are increasingly software-defined and network-connected. They are already shaped by regulatory constraints, safety requirements, and manufacturer control. Parts pairing in this context can become a serious ethical question.

If a hearing aid’s microphone module fails, should replacement require manufacturer authorization? If a prosthetic’s sensor degrades, should a third-party technician be prevented from restoring function? If a medical device is out of warranty, should the patient be forced into expensive official channels even when qualified local repair exists?

Safety is crucial, but safety can coexist with repair rights if systems are designed for it. If they are not designed for it, safety becomes a justification for locking patients into dependency. The line between protection and captivity becomes thin.

The Future Is Not About Whether Devices Can Be Repaired, It Is About Who Gets to Decide

The technical story of parts pairing is simple. The cultural story is not. We are building a world where physical objects increasingly require digital permission to remain whole. That is a choice, not an inevitability. We can design systems that resist tampering while still honoring repair. We can build calibration workflows that protect quality without monopolizing access. We can treat owners like adults, capable of informed decisions, instead of treating them like temporary renters in denial.

The hardest part is that the battle is quiet. It does not arrive as a dramatic announcement. It arrives as a warning banner, a missing toggle, a feature that stops working after a repair, a tool that only “authorized providers” can use, a policy that sounds reasonable until it becomes the default structure of everyday life.

And once everyday life is structured around permission, the question stops being about a phone screen or a battery. It becomes a question about what ownership means in an era where objects can disagree with you.